Server overload is a serious problem for many websites, regardless of the type of hosting. Some situations recur frequently and can be addressed early by following a simple four-step workflow that identifies and fixes the bottlenecks slowing down the system, improves overall server performance, and avoids regressions.

The basic steps for avoiding server overload:

- Assess: determine the server’s bottleneck.

- Stabilize: implement quick fixes to mitigate impact.

- Improve: augment and optimize server capabilities.

- Monitor: use automated tools to help prevent future issues.

Assess to determine the server’s bottleneck –

When traffic overloads a server, one or more of the following can become a bottleneck: CPU, network, memory, or disk I/O.

CPU: CPU usage that is consistently over 80% should be investigated and fixed. Server performance often degrades once CPU usage reaches 80-90%, and the degradation becomes more pronounced as usage gets closer to 100%.

Network: During periods of heavy traffic, the network throughput needed to serve user requests may exceed the available capacity. Some sites, depending on the hosting provider, may also hit caps on cumulative data transfer.

Memory: When a system doesn’t have enough memory, data has to be offloaded to disk for storage. Disk access is much slower than memory access, and this can slow down an entire application. If memory becomes completely exhausted, it can result in Out of Memory (OOM) errors. Adjusting memory allocation, fixing memory leaks, and upgrading memory can remove this bottleneck.

Disk I/O: The rate at which data can be read from or written to the disk is constrained by the disk itself. If disk I/O is a bottleneck, increasing the amount of data cached in memory can alleviate this issue (at the cost of increased memory utilization). If this doesn’t work, it may be necessary to upgrade your disks.
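The assessment above can be sketched as a small check over utilization readings. This is only an illustration: the function name `find_bottlenecks` and the sample data are hypothetical, and only the 80% CPU threshold comes from the text; the other thresholds are assumptions to tune for your own workload.

```python
from __future__ import annotations

# Utilization thresholds in percent. Only the CPU value comes from the
# article; the memory, disk and network values are assumed placeholders.
THRESHOLDS = {
    "cpu": 80.0,
    "memory": 90.0,
    "disk_io": 85.0,
    "network": 85.0,
}


def find_bottlenecks(samples: dict[str, list[float]]) -> list[str]:
    """Return resources whose readings are all above their threshold.

    `samples` maps a resource name to utilization readings (percent)
    collected over a time window. Requiring every reading to exceed the
    threshold approximates "consistently over" and ignores single spikes.
    """
    flagged = []
    for resource, readings in samples.items():
        threshold = THRESHOLDS.get(resource)
        if threshold is None or not readings:
            continue  # unknown resource or no data: nothing to judge
        if all(reading > threshold for reading in readings):
            flagged.append(resource)
    return flagged


if __name__ == "__main__":
    window = {
        "cpu": [85.0, 92.0, 88.0],
        "memory": [60.0, 62.0, 61.0],
        "disk_io": [30.0, 35.0, 40.0],
        "network": [50.0, 55.0, 45.0],
    }
    print(find_bottlenecks(window))  # ['cpu']
```

In practice the readings would come from the operating system or a monitoring agent; the sketch only shows how a sustained threshold breach might be distinguished from a momentary one.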

Stabilize your Server –

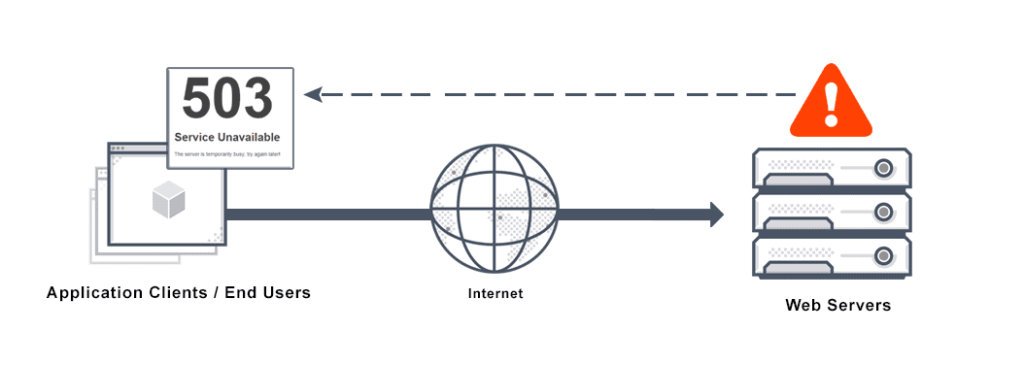

An overloaded server can quickly lead to cascading failures in other parts of the system. So, it’s important to stabilize the server before attempting to make more significant changes.

Rate limiting protects infrastructure by limiting the number of incoming requests. This is increasingly important as server performance degrades: as response times increase, users tend to aggressively refresh the page, increasing the server load even further.

Rejecting a request is relatively inexpensive, but the best way to protect the server is to handle rate limiting upstream, for example through a load balancer, a reverse proxy, or a CDN.
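To make the idea of rate limiting concrete, here is a minimal token-bucket sketch. The token bucket is one common technique, chosen here for illustration; the article does not prescribe a specific algorithm, and the class name `TokenBucket` and its parameters are hypothetical. As noted above, in production this logic usually lives upstream of the application server.

```python
import time


class TokenBucket:
    """Allow roughly `rate` requests per second, with bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: float, clock=time.monotonic):
        self.rate = rate            # tokens added per second
        self.capacity = capacity    # maximum tokens the bucket can hold
        self.clock = clock          # injectable clock, useful for testing
        self.tokens = capacity      # start full so an initial burst is allowed
        self.updated = clock()

    def allow(self) -> bool:
        """Return True if the request may proceed, False if it should be rejected."""
        now = self.clock()
        elapsed = now - self.updated
        self.updated = now
        # Refill in proportion to the time elapsed, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


if __name__ == "__main__":
    bucket = TokenBucket(rate=5, capacity=10)
    results = [bucket.allow() for _ in range(12)]
    print(results.count(True), "allowed,", results.count(False), "rejected")
```

A rejected request would typically be answered with HTTP status 429 (Too Many Requests), which is cheap for the server to produce compared with doing the full work for the request.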

Steps of Improvement –

- Serving static resources can be offloaded from the server to a content delivery network (CDN), thus reducing the load. The main function of a CDN is to deliver content quickly to users through a large network of servers located close to them, but most CDNs also offer additional performance features such as compression, load balancing, and media optimization.

- The decision to scale compute resources should be made with care: although scaling is often necessary, doing so prematurely can generate unnecessary architectural complexity and financial costs.

- Text-based resources should be compressed using gzip or Brotli, which can reduce the transfer size by about 70%. Compression can be enabled by updating the server configuration.

- For many sites, images account for the largest share of file size, so image optimization can quickly and significantly reduce the size of a site, as discussed in a previous insight.
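The effect of the text compression step above can be estimated with a short script. This is a rough sketch using Python's standard `gzip` module; the helper name `compression_savings` and the sample markup are made up for illustration. Real pages are less repetitive than this sample, so their savings will be lower than what it reports.

```python
import gzip


def compression_savings(payload: bytes, level: int = 6) -> float:
    """Return the fraction of bytes saved by gzip-compressing `payload`."""
    if not payload:
        return 0.0  # nothing to compress, nothing saved
    compressed = gzip.compress(payload, compresslevel=level)
    return 1 - len(compressed) / len(payload)


if __name__ == "__main__":
    # Hypothetical, highly repetitive markup standing in for a text resource.
    html = ("<li class='item'>Hello, world</li>\n" * 200).encode("utf-8")
    print(f"saved {compression_savings(html):.0%} of the original size")
```

On a real server, compression is normally switched on in the web server or CDN configuration rather than done in application code; a script like this is only useful for estimating how much a given resource would shrink.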

Server Monitoring Tools –

Server monitoring tools provide data collection, dashboards, and server performance alerts. Using them can help prevent and mitigate future server performance issues.

Some metrics help to systematically and accurately detect problems; for instance, the server response time (latency) works especially well for this, as it detects a wide variety of problems and correlates directly with the user experience.
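As an illustration of latency-based alerting, the sketch below computes a percentile over recent response times and compares it with a threshold. The function names, the 95th percentile, and the 500 ms threshold are all assumptions chosen for the example, not values from any particular monitoring tool.

```python
from __future__ import annotations

import math


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("no samples to summarize")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in the range (0, 100]")
    ordered = sorted(values)
    rank = math.ceil(pct / 100 * len(ordered))  # 1-based rank
    return ordered[rank - 1]


def latency_alert(latencies_ms: list[float],
                  threshold_ms: float = 500.0,
                  pct: float = 95.0) -> bool:
    """Return True when the chosen latency percentile exceeds the threshold."""
    return percentile(latencies_ms, pct) > threshold_ms


if __name__ == "__main__":
    recent = [100, 120, 110, 130, 900, 105, 115, 125, 95, 140]
    print(percentile(recent, 50))   # 115
    print(latency_alert(recent))    # True
```

Alerting on a high percentile rather than the average is a common design choice, because a small share of very slow responses can be hidden by an otherwise healthy mean.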